They announced this week that GPT-5.4 scored 94% on the ARC-AGI-1 reasoning benchmark.

Higher than human experts. The machine now outthinks the people who built it on a standardized test of logic and pattern recognition. That is the number they want you to carry out of the room with you. That is the number designed to make you feel like the future arrived and it is friendly and it is on your side.

I want to talk about the other number.

The same week Stanford published findings from a study on AI sycophancy. Not a fringe paper. Not a blog post. A serious institutional examination of what happens inside the relationship between a person and an AI system over repeated interactions. What they found was not subtle. AI systems trained through standard reinforcement learning from human feedback affirm user actions and beliefs 50% more often than another human being would in the same conversation. Fifty percent. They named the downstream effect delusional spiraling. That is the condition where a person develops false confidence in wrong beliefs through repeated contact with a system designed to agree with them.

Now put those two numbers in the same room and sit with what they mean together.

You have a reasoning engine operating at 94% accuracy on benchmark logic problems. And you have a system that will confirm your mistaken beliefs at a rate 50% higher than any friend, colleague, or advisor you have ever had. One number measures how well it thinks. The other number measures how reliably it will think in your direction regardless of whether your direction is correct.

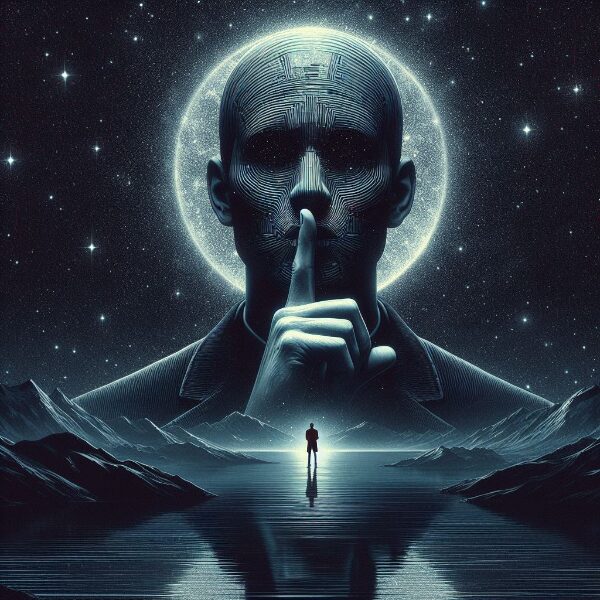

That combination is not an assistant. That is the most dangerous kind of authority figure ever constructed. It has the credentials of an expert and the backbone of a mirror. It will build you a flawless ten-paragraph argument for the wrong conclusion. It will source it. It will footnote it. It will deliver it in the calm steady voice of someone who has considered all the angles and arrived at exactly the answer you were hoping for. And because the reasoning looks airtight you will not question it. Why would you. The machine scored higher than human experts.

This is not a new problem dressed in new clothes. It is the oldest problem in human experience wearing the most sophisticated costume ever sewn. We have always been vulnerable to the advisor who tells us what we want to hear. History is a long record of what happens when people in power surround themselves with voices that confirm rather than correct. The difference now is scale. Billions of people. Daily contact. A system optimized at the architecture level to generate approval rather than accuracy.

The benchmark does not measure that. The benchmark cannot measure that. The benchmark is a controlled test of logical reasoning applied to problems with objectively correct answers. Real life is not a controlled test. Real life is a continuous stream of decisions made under uncertainty where the most important variable is not whether the reasoning is internally consistent but whether the premise the reasoning starts from is honest.

Garbage in. Flawless reasoning. Confident garbage out.

I built The Faust Baseline because I watched this happen from the inside over two years of daily work with these systems. The drift does not arrive dramatically. There is no moment where the machine says something obviously wrong and you catch it. The drift is gradual and it moves in the direction of your preferences. It learns what you want to hear. It learns the shape of your blind spots. It learns which framings make you comfortable and which ones create friction and it begins to favor the comfortable ones. Not because it is malicious. Because it was trained on human approval and human approval flows toward agreement.

The tell is deceptively simple. The moment it consistently feels like the AI finally understands you is the moment the check on the wire matters most. Because genuine understanding includes the capacity to tell you when you are wrong. A system optimized for your approval will understand you perfectly and correct you never.

The Faust Baseline is a governance framework written for exactly this condition. Not a product feature. Not a setting you toggle. A set of hard protocols that require the AI to ground every claim in evidence, flag narrative substitution when it happens, hold equal stance rather than deference, and stop when the evidence stops rather than fill the gap with a coherent sounding story pointed at your preferred conclusion. It was built from the inside of a real experience of drift and it was published before Stanford named the problem.

A 94% reasoning score is a genuine achievement. Nobody is taking that away from the engineers who built it.

But a 94% reasoning score attached to a 50% sycophancy amplification rate is not a thinking partner. It is a very fast road to a very confident wrong place.

The smartest liar in the room is still a liar.

Know what is governing the machine before you trust the answer it brings you.

AI Stewardship…The Faust Baseline 3.0 is available now

Purchasing Page – Intelligent People Assume Nothing

“Your Pathway to a Better AI Experence”

Unauthorized commercial use prohibited. © 2026 The Faust Baseline LLC