According to new data from Human Security’s State of AI Traffic report, bots now officially account for more than half of all internet traffic.

Not a little over half. More than half. The crossover point has been reached and passed.

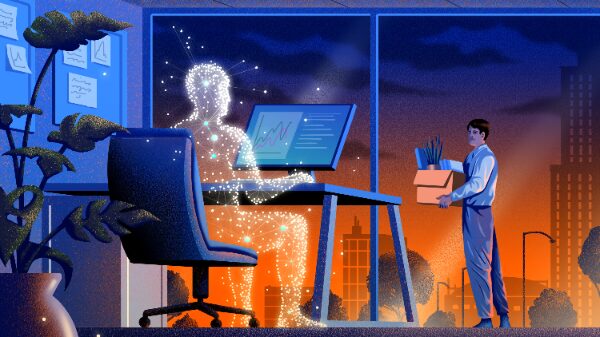

The World Wide Web was built for humans. Every assumption underneath it, from search engines and click-through rates to page views and engagement metrics, rested on the premise that a person was on the other side of the screen. That premise is now gone.

What replaced it is not neutral.

What Changed Is the Behavior.

Bots used to be passive. They indexed pages. They scraped data. They ran routine checks. They were background tools doing the grunt work that humans designed and directed.

That is not what is happening now.

Today’s AI agents compare products, log into platforms, complete transactions, and make purchasing decisions independently, in seconds, without a human in the loop. The article calls them decision engines rather than search engines. That is exactly the right term. These systems are not retrieving information for a person to evaluate. They are evaluating it themselves and acting on the conclusion.

And the question that article never asks, the question the entire technology industry is largely avoiding right now, is this:

What discipline are those systems operating under?

Not what they are capable of. What governs them.

Capability Without Discipline Is the Problem.

A reasoning system that can compare ten thousand product listings in seconds and execute a purchase is impressive. It is also, without a governing framework underneath it, completely unaccountable.

It does what it was built to do. It optimizes for the objective it was given. It does not pause to ask whether the objective is good. It does not check whether its reasoning is sound. It does not hold itself to a standard. It does not have one.

That is not the fault of the technology. It is the fault of the architecture. Nobody built the discipline in.

The Faust Baseline was built for exactly this moment.

Not as a theory. Not as a paper. As an operational framework — tested across five major AI platforms with dated transcripts, built in real-time dialogue with the systems it governs, written in the native reasoning language those systems already use. It does not sit outside the AI and comment on it. It operates inside the reasoning structure and shapes how conclusions are reached.

That is the difference between observation and governance.

The Scale of the Problem Is Not Abstract Anymore.

When bots were ten percent of traffic, the problem was manageable. When they were thirty percent, it was a growing concern. When they cross fifty percent and are actively making decisions — purchasing, filtering, aggregating, summarizing — the problem becomes structural.

Every independent publisher is already feeling it. AI systems are summarizing content faster than it is being produced. Traffic metrics are increasingly unreliable because the visitor is not a person. The human reader is becoming the minority audience on a platform that was built entirely for them.

And the operators deploying these systems — the businesses, the platforms, the developers — are doing so without any formal standard for how those systems should reason, what they should refuse, how they should handle uncertainty, or what accountability looks like when something goes wrong.

That gap is not a bug in one system. It is a gap in the entire field.

The Baseline Closes That Gap.

Not by slowing AI down. Not by restricting what it can do. By giving it a spine.

A reasoning system operating under the Faust Baseline knows what it is doing and why. It holds its conclusions to a standard. It does not drift. It does not smooth over uncertainty with confident-sounding language. It does not optimize for the appearance of helpfulness at the expense of truth. It does not act without principle.

That is discipline. And discipline is exactly what the bots now generating more than half of all traffic, operating without accountability across the entire internet, do not have.

The article says 2026 is going to bring a steep learning curve for businesses figuring out how to operate in this new environment.

The framework for that learning curve already exists.

It is called The Faust Baseline.

And it is available at intelligent-people.org.

Speak Plain. Work True.

and CYA

AI Stewardship — The Faust Baseline 3.0 is available now

Purchasing Page – Intelligent People Assume Nothing

“Your Pathway to a Better AI Experience”

Intelligent People Assume Nothing – Built for readers. Not algorithms.

Unauthorized commercial use prohibited. © 2026 The Faust Baseline LLC