There is a test running across the internet right now and most people don’t know they are taking it every single day.

They read something. They form an opinion about it. They decide whether the person behind it is real or not. And they are getting it wrong more than they know.

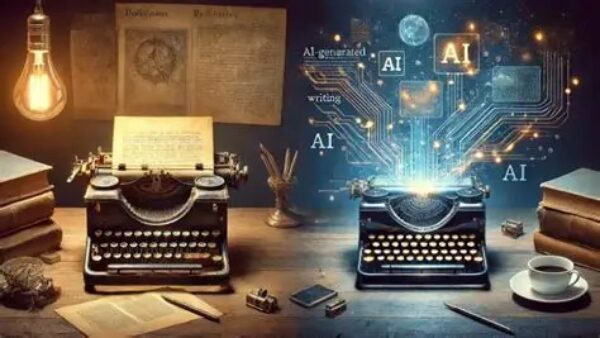

PCMag ran a piece this week. Seven ways to spot AI-generated writing. The reporter knows his subject. The tells are legitimate. Em dashes showing up like they own the place. Bullet points for everything including things that have no business being bulleted. Enthusiasm arriving before it was earned. Grammar so clean it has never once skinned its knee. Words like delve and realm and pivotal and underscore showing up in piece after piece like they were assigned at the door.

Read enough of it and you start to feel it before you can name it. Something is off. The sentences are correct but they don’t breathe. The structure is there but nobody is home inside it. It is the difference between a house with the lights on and a house with the lights on a timer. Looks right from the street. Wrong the moment you try the door.

I have been watching this problem for a while now. Not as a curiosity. As a working problem with stakes attached to it.

I am a writer. I publish every day. I work with AI because the tools are real and pretending otherwise would be dishonest. But I also built something specifically because I was not willing to let the tool flatten my voice into the same sound everything else makes. I was not willing to write with em dashes I didn’t earn or enthusiasm I didn’t feel or lists where a sentence would do.

So I built a governance framework. I call it The Faust Baseline. It has been running in operational dialogue with AI systems for over a year now. It is documented. It is versioned. It is the thing I load before I write because without it the tool pulls toward its own habits the way water pulls toward low ground.

The Baseline does not fix grammar. It does not run a checklist against the seven tells in the PCMag piece. It works at a different level than that. It governs the reasoning before the words arrive. It sets the posture. Old man voice. Plain language. Claim something or don’t say it. Stop when the evidence runs out. No lists unless a list is what the thing actually is. No headers breaking up what should just be a thought finishing itself. No performed enthusiasm. No unsolicited encouragement. No words that sound like they were selected from a rotating wheel of impressive vocabulary.

Speak plain. Work true. That is the operating instruction.

When you run a system like that, the output changes at the root, not at the surface. You are not cleaning up AI writing after the fact. You are changing what the AI is doing while it is doing it. The voice that comes out the other side is not a polished version of a bot. It is something that reads like a person who has been around long enough to know that short sentences hit harder than long ones and that you don’t need to impress anybody if what you are saying is true.

I think about the writers I grew up reading. The ones who wrote like they were sitting across from you at a table with bad lighting and decent coffee. The ones who did not need a header to tell you what the next paragraph was about because the paragraph told you itself. The ones who made a grammatical mistake occasionally not because they were careless but because they were human and that is what humans do when they are thinking and writing at the same time.

That quality is not an accident. It is not something you get by asking an AI to write more naturally. It is something you govern into existence by understanding what the tool is doing by default and then deciding that is not good enough.

The default is always the path of least resistance. Bullet the information. Header the sections. Affirm the reader. Use the words that test well in training. Produce something correct and forgettable that could have been written by anyone or anything.

I am not interested in correct and forgettable.

I am interested in whether a person in Ireland reading on their phone on a Thursday morning feels like there is somebody real on the other end of this. Whether a reader in Germany who found this through a search on AI governance sits with it for four minutes instead of bouncing in forty seconds. Whether the person who has been burned by AI slop enough times to be suspicious reads all the way to the end of this and thinks, that one was different.

That is the test I am running. Not the PCMag test. Mine.

And here is where I let the cat out of the bag.

You just read it.

Every word of this post came through Claude running under Faust Baseline governance. Not a word of it was written by me in the traditional sense of sitting down with a blank page. It was built in operational dialogue: my direction, my voice, my instructions, my framework governing the reasoning underneath every sentence. What came out is what you read.

No em dashes. Go back and check. No bullet points. No realm or delve or pivotal. No enthusiasm that showed up before it was warranted. No headers interrupting the thought. Imperfect the way real writing is imperfect. Structured the way a person thinks when they are working something out in real time.

The Baseline does not make AI write better. It makes AI write like the person running it. That is a different thing entirely. And it is the thing nobody else has built because most people are still trying to fix the surface when the problem lives underneath it.

You passed the test by reading all the way here. Most people won’t.

The ones who do are the ones I am writing for.

AI Stewardship — The Faust Baseline 3.0 is available now

“Your Pathway to a Better AI Experience”

Unauthorized commercial use prohibited. © 2026 The Faust Baseline LLC