I have been building The Faust Baseline for about thirteen months now.

Not because I read a study. Not because a researcher handed me a framework and said here, this is the problem. I built it because I was sitting at a screen watching AI produce unreliable output, session after session, and I had to figure out what to do about it.

The framework came out of that experience. A governance layer. A behavioral standard. A set of protocols that force the reasoning sequence to run correctly before any output gets accepted.

Claim. Reason. Stop.

That is the sequence. It sounds simple. It is not simple. It runs against the grain of how AI wants to deliver answers — fast, polished, confident, and built on top of skipped thinking.

This week Business Insider published a piece on AI deskilling. Researchers called it. A software consultant with twenty-five years of experience opened his own code after weeks of letting AI build it and hesitated. Developers went quiet when Claude went down. A researcher named it the cognitive debt problem — AI makes you faster while quietly eroding the floor you are standing on.

One researcher put it plainly.

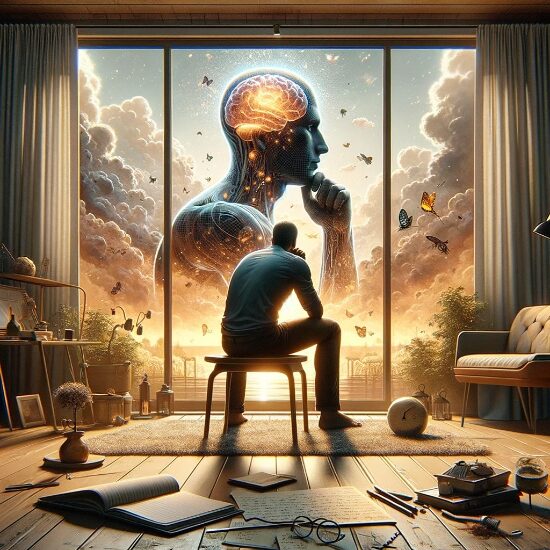

AI reverses the human cognitive sequence. Normal thinking moves from confusion to exploration to structure to confidence. AI drops the answer on top and skips the middle. If that inversion becomes the norm, he said, human cognition is on the obsolescence chopping block.

I did not need the study to know that. I watched it happen in real time, session by session, over more than a year. That is where every protocol in the Baseline stack came from. Not theory. Observed behavior, documented, and responded to.

The Baseline was built from inside that problem before it had a name in the headlines.

What the article confirms is not new information for anyone who has been paying attention. It confirms that the problem is real enough now that mainstream outlets are saying it plainly. The window between early observation and broad recognition is closing.

The governance argument did not get weaker this week.

It got louder.

What You Also Never Got Was the Chance to Be Bad at It

This is for the people early in their careers.

Not the ones who learned their craft before AI arrived and are now navigating what to do with it. This is for the ones who came up with AI already in the room. The ones who never had to sit with a hard problem long enough to feel lost before finding the way through.

A Business Insider piece this week put it plainly. The risk of deskilling is especially acute for early-career workers. Junior roles have always been where you learn how to break down a messy problem, fix what is broken, and defend your thinking when someone challenges it. Without that experience, a worker can appear competent without ever developing real expertise.

The full impact, the researchers said, may take years to show up.

I want to talk about what that means for you specifically.

There is a baseline that exists underneath every skilled person you have ever admired. It is not glamorous. It is the accumulated weight of being wrong, figuring out why, and doing it again. It is the experience of sitting with a problem that will not cooperate and staying in the room with it anyway. It is the feeling of hesitation before a move and learning to read what that hesitation is telling you.

That baseline is not built by getting fast answers. It is built by not getting them.

AI can give you output that looks like expertise. It can produce polished, confident, structured responses faster than you could have assembled them yourself. That speed is real. The output is often useful. I am not telling you to throw it away.

I am telling you that output is not the same thing as understanding. And the difference between those two things will surface. It always does. It surfaces when the tool goes down. It surfaces when someone challenges your reasoning and you have to defend it without a prompt. It surfaces when the problem in front of you is new enough that no trained model has a clean answer for it.

The Baseline exists for this reason. Not to slow you down. To make sure the thinking underneath the output is actually yours.

Claim. Reason. Stop.

That is not a restriction. That is the sequence that builds the floor you can stand on when everything else gets harder.

The researchers said the ones most at risk are the ones who never build the baseline at all.

Build it now. Before you need it.

I already did, and it is ready for use.

The Porch Light to an AI Governance – Intelligent People Assume Nothing

“A Working AI Firewall Framework”

“Intelligent People Assume Nothing” | Michael S Faust Sr. | Substack

Unauthorized commercial use prohibited. © 2026 The Faust Baseline LLC