Where there is fire there is a firehose. When there is access to it.

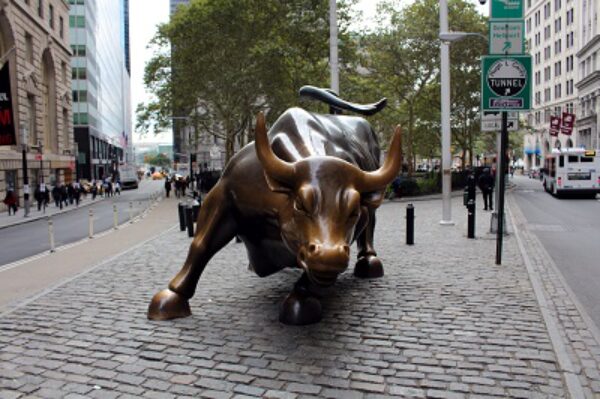

Lloyd Blankfein spent decades at Goldman Sachs learning one thing above everything else. How to manage risk before it manages you. He sat through the 1987 crash. The dot-com bust. The 2008 financial crisis. He watched Wall Street rebuild itself from the wreckage each time. So when a man with that resume sits down and tells you what worries him about AI, it is worth putting down whatever you are doing and listening.

It is not robots. It is not superintelligence. It is not science fiction.

“We don’t have the ability to test whether it’s right or not.”

That is the fire.

Blankfein described watching AI execute at a scale no human trading floor ever could. Seventy thousand transactions while you are looking the other way. Everything whirring behind the scenes. No way to see the thought process. No way to verify the output before it lands. On the old trading floor every mistake was audible. The room went quiet at the smallest slip. Now the room is silent whether something is wrong or not. And that silence is the danger.

Goldman Sachs has spent billions deploying AI across trading, compliance, and back office operations. And the man who ran that institution just said in plain language that they cannot tell if it is right.

That is not a small admission. That is the most precise description of the governance gap anyone in finance has put into words.

The Reckoning Is Already Priced In

Dan Niles has been in this business long enough to know what a boom looks like from the inside and what it looks like just before it breaks.

He is not calling the end of AI. He is calling the end of the first chapter. His prediction is direct. AI stocks could fall thirty to fifty percent in early 2027. Not because AI stops working. Because the market overbuilt on enthusiasm before the governance caught up with the capability.

He compared this moment to 1997 and 1998. Not the dot-com peak. The infrastructure boom that came just before it. The companies building the pipes were real. The money flowing into them was not always rational. And when the sentiment turned it turned fast.

The companies that survived that reset were not the ones with the biggest valuations. They were the ones that could show their work. Could demonstrate that what they built actually held up under pressure. Could answer the question Blankfein is asking right now.

Is it right? Can you test it? Can you prove it before it costs you everything?

Most of them could not answer that question in 2000. Most AI deployments cannot answer it today.

That is the reckoning. And it is already priced in for anyone paying attention.

The Baseline Is The Firehose

Here is where the two stories connect.

Blankfein named the problem. Niles named the consequence. Neither of them named the answer. But the answer exists. It has been documented, published, and sitting in plain sight for eighteen months.

You cannot govern what you cannot test. That is Blankfein’s problem stated simply.

The Faust Baseline is a testing framework built into the session itself. Not after the fact. Not in a quarterly audit. In the session. Before the output executes.

SVP-1 runs three verification questions on every substantive response before it goes out. Is this claim supported by evidence present in this session? Does this contradict anything established earlier? Is the confidence level proportional to the evidence actually present?
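
For readers who think in code, the three SVP-1 questions can be pictured as a gate a response must clear before it goes out. What follows is an illustration only, not the Baseline's own implementation. The `Claim` record, the `svp1_check` function, the three-level confidence scale, and the rule that each piece of supporting evidence permits one level of confidence are all assumptions made for this sketch.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Claim:
    """A hypothetical record for one substantive claim in a response."""
    text: str
    evidence: List[str] = field(default_factory=list)     # session items cited in support
    contradicts: List[str] = field(default_factory=list)  # earlier statements it conflicts with
    confidence: int = 1                                    # 1 = low, 2 = medium, 3 = high


def svp1_check(claim: Claim, session_evidence: Set[str]) -> List[str]:
    """Return the names of the checks that failed; an empty list means the claim passes."""
    failures = []

    # Only evidence that is actually present in this session counts.
    supported = [item for item in claim.evidence if item in session_evidence]

    # Question 1: is the claim supported by evidence present in this session?
    if not supported:
        failures.append("unsupported")

    # Question 2: does it contradict anything established earlier?
    if claim.contradicts:
        failures.append("contradiction")

    # Question 3: is the confidence level proportional to the evidence present?
    # Assumed rule: one confidence level per supporting item, capped at 3.
    allowed = min(3, len(supported))
    if claim.confidence > allowed:
        failures.append("overconfident")

    return failures


session = {"q3_filing", "risk_memo"}
grounded = Claim("Exposure fell in Q3.", evidence=["q3_filing"], confidence=1)
guess = Claim("Exposure will keep falling.", confidence=3)

passed = svp1_check(grounded, session)
blocked = svp1_check(guess, session)
```

In this sketch the grounded claim clears all three questions, while the unsupported forecast fails the first and third. Only a claim with an empty failure list would go out.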

That is the test Blankfein said Goldman does not have.

CHP-1 gives the operator a standing right to challenge any output at any time. The AI argues against its own response before the operator does. Names the weakest point. Names where agreement bias may have shaped the conclusion.

That is the audit Niles said the market is missing.

RTEL-1 stops any response the moment it conflicts with the governance standards the operator established at session open. Not after the seventy thousand transactions. Before the first one that does not belong.
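
The same stop-before-it-executes idea can be pictured in a few lines of code. This is a sketch under stated assumptions, not how the Baseline itself works. The function `run_with_halt`, the `rules` mapping, the `execute` callback, and the `max_notional` limit are hypothetical names chosen for illustration. The rules stand in for the standards set at session open, and every action is checked against them before it runs.

```python
from typing import Callable, Dict, Iterable, Optional

Rule = Callable[[dict], bool]  # returns True when the action is allowed


def run_with_halt(
    actions: Iterable[dict],
    rules: Dict[str, Rule],
    execute: Callable[[dict], None],
) -> Optional[str]:
    """Execute actions in order and stop before the first one that breaks a rule.

    Returns the name of the violated rule, or None if every action ran.
    """
    for action in actions:
        for name, allowed in rules.items():
            if not allowed(action):
                # Halt here: this action and everything after it never executes.
                return name
        execute(action)
    return None


# Hypothetical standard fixed at session open: no single order above 1,000.
rules = {"max_notional": lambda action: action["notional"] <= 1000}

executed = []
orders = [{"notional": 100}, {"notional": 5000}, {"notional": 50}]
violated = run_with_halt(orders, rules, executed.append)
```

Here the first order runs, the second is stopped before it executes, and the third never gets the chance. The point of the design is the ordering: the check sits in front of the action, not behind it.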

That is the silence Blankfein described on the old trading floor. The room going quiet before the mistake completes. Built into the framework instead of waiting for someone to notice.

The Baseline does not promise perfection. No governance framework does. What it promises is that the AI working with you is anchored to your standards and that the gap between what it outputs and what you authorized is visible before it costs you.

That is the firehose. And unlike the fire it does not arrive after the damage is done.

Goldman Sachs is asking the right question. The Baseline has the architecture to answer it.

Look. Listen. Act.

“The Faust Baseline Codex 3.5”

“AI Baseline Governance”

Post Library – Intelligent People Assume Nothing

“Your Pathway to a Better AI Experience”

Purchasing Page – Intelligent People Assume Nothing

Unauthorized commercial use prohibited. © 2026 The Faust Baseline LLC