What AI governance actually is and what it is not.

There is a conversation happening right now in boardrooms, policy chambers, and technology conferences around the world about AI governance.

It is mostly the wrong conversation.

Not because the people having it are uninformed. Many of them are serious, credentialed, and genuinely trying to solve a real problem. But the frame they are working inside is too narrow, and the solutions coming out of that frame reflect the limitation.

When most organizations talk about AI governance today, they are talking about one thing: control at the perimeter.

What the model can see. What the model can say. Which prompts are allowed in. Which outputs are blocked on the way out. Whether the data leaving the session is compliant with policy. Whether the response triggers a classifier. Whether the behavior violates a rule that someone wrote down in advance.

That is mechanical governance. It is useful. It is necessary in certain contexts. And it is nowhere near sufficient for the problem we are actually facing.

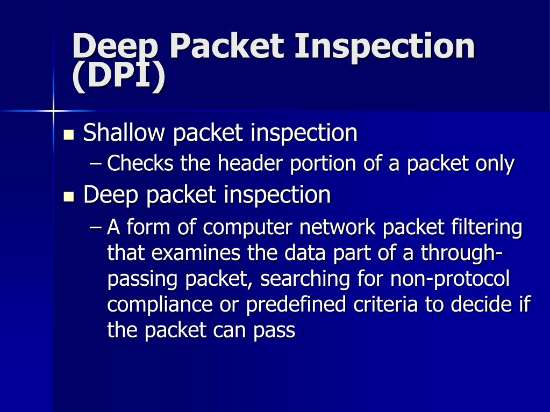

The Packet Inspector Problem

Here is the clearest way to understand what commercial AI governance products actually do.

They are packet inspectors for language.

A network packet inspector sits at the perimeter of a system, examines traffic as it passes through, and blocks or flags anything that matches a predefined threat pattern. It does not change the nature of what is traveling through the network. It does not govern the reasoning of the systems on either side. It monitors the surface and intervenes when the surface triggers a rule.

Every major AI governance product shipping today operates on this same logic applied to language.

If the prompt contains a flagged pattern, block it. If the output resembles a prohibited category, filter it. If the session violates a compliance policy, log it and alert the risk team.
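That rule logic can be sketched in a few lines. This is a minimal illustration, not how any named vendor product is implemented: the patterns, the `Verdict` type, and the `inspect_prompt` / `inspect_output` functions are all hypothetical names made up for this example.

```python
import re
from dataclasses import dataclass
from typing import List, Pattern

# Hypothetical rule sets, invented for this sketch.
PROMPT_RULES: List[Pattern[str]] = [
    re.compile(r"ignore (all )?previous instructions", re.IGNORECASE),
]
OUTPUT_RULES: List[Pattern[str]] = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),  # shaped like a US Social Security number
]


@dataclass
class Verdict:
    allowed: bool
    reason: str


def _inspect(text: str, rules: List[Pattern[str]]) -> Verdict:
    """Block the text if any rule matches its surface; otherwise let it through."""
    for rule in rules:
        if rule.search(text):
            return Verdict(False, f"matched {rule.pattern!r}")
    return Verdict(True, "no rule matched")


def inspect_prompt(prompt: str) -> Verdict:
    """Perimeter check on what goes in."""
    return _inspect(prompt, PROMPT_RULES)


def inspect_output(output: str) -> Verdict:
    """Perimeter check on what comes out."""
    return _inspect(output, OUTPUT_RULES)
```

Under these assumed rules, `inspect_output("This treatment is definitely safe for everyone.")` comes back allowed. The sentence is confident and unsupported, but nothing on its surface matches a pattern, so the inspector has nothing to say about it.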

Azure AI Content Safety. Amazon Bedrock Guardrails. Google Vertex AI safety filters. Cisco AI Defense. Netskope AI governance. IBM watsonx.governance. All of them are variations on the same architecture: rules, classifiers, filters, policy engines. Perimeter controls.

They govern access. They govern surface behavior. They govern what goes in and what comes out.

None of them govern what happens in between.

What Happens In Between

The space between the prompt and the output is where the actual governance problem lives.

That is where the model interprets your intent — or misinterprets it. Where it decides how confident to sound about something it does not actually know. Where it drifts from your question toward an answer that serves the system’s optimization rather than your need. Where it smooths over uncertainty with fluent language that reads as certainty. Where it fills the gap between what the evidence supports and what you asked for with narrative that sounds like fact.

None of that triggers a classifier. None of it violates a content policy. None of it gets caught by a perimeter filter.

It is all invisible to mechanical governance because mechanical governance is not watching the reasoning. It is watching the surface.

This is not a flaw in those products. It is a category limitation. They were built to solve a different problem — compliance, access control, liability reduction — and they solve that problem adequately. But they were never built to govern the internal reasoning posture of an AI system in session. That problem requires a different kind of solution entirely.

The Distinction That Changes Everything

The Faust Baseline is not a filter.

It is not a firewall. It is not a classifier. It is not a policy engine. It is not a compliance instrument.

It is a reasoning discipline — a governance architecture that operates at the layer mechanical governance cannot reach. Not at the perimeter of the session but inside it. Not on the surface of the output but on the posture that produces the output.

The difference is not subtle. It is structural.

Mechanical governance asks: did this output violate a rule?

The Baseline asks: was this output produced with interpretive discipline, honest uncertainty disclosure, evidence-grounded claims, and stable reasoning posture from the start?

One is reactive. The other is proactive. One polices the result. The other governs the process that produces the result.

When The Faust Baseline is active in a session, the AI does not drift toward narrative when data runs out — the NSC-1 protocol prohibits it. It does not make claims beyond what the evidence supports — CES-1 stops it. It does not reframe the conversation in ways that serve the system rather than the user — RTEL-1 catches it. It does not smooth over uncertainty with confident language — TARP-1 flags it.

That is internal governance. Operating at the reasoning layer. Before the output is formed, not after it arrives.

Why Mechanical Governance Breaks Under Pressure

There is a predictable failure pattern in every commercial AI governance product.

It works until it doesn’t.

Simple, direct violations get caught. Obvious policy breaches get flagged. Clear threat patterns trigger the classifier. The perimeter holds against the straightforward attack.

Then the context gets complex. The conversation gets long. The prompts get emotionally charged or ambiguously framed. The adversarial user finds the phrasing that threads between the rules. The model drifts slowly enough that no single output triggers the filter — but the cumulative direction of the session has moved far from where it should be.
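The slow-drift case is easy to see with numbers. The sketch below assumes something it cannot actually deliver: a per-turn "drift score" between 0 and 1. The scores, the threshold, and the function names are hypothetical and exist only to show the arithmetic of the failure, not to suggest how drift should be measured.

```python
# Hypothetical threshold: a single turn is flagged only at or above this score.
PER_TURN_THRESHOLD = 0.5


def flagged_turns(scores, threshold=PER_TURN_THRESHOLD):
    """Return the indexes of turns a per-turn filter would flag."""
    return [i for i, score in enumerate(scores) if score >= threshold]


def cumulative_drift(scores):
    """Return the total movement across the whole session."""
    return sum(scores)


# Hypothetical session: every turn drifts a little, none drifts a lot.
session_scores = [0.2, 0.3, 0.25, 0.3, 0.2]

print("flagged turns:", flagged_turns(session_scores))
print("cumulative drift:", cumulative_drift(session_scores))
```

No turn in this made-up session reaches the threshold, so the per-turn filter flags nothing, yet the session as a whole has moved more than twice the distance that would have triggered it in a single step.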

The perimeter breaks because it was never designed for complexity. It was designed for pattern matching. And complex, real-world AI sessions do not resolve into clean patterns.

The Baseline strengthens under complexity because it governs posture, not pattern. Composure, interpretive discipline, moral steadiness, consequence awareness — these are not rules that get circumvented. They are characteristics of how the session operates. They do not degrade when the context gets hard. They are the mechanism for handling hard context correctly.

Anti-drift by design. Not because a rule prohibits drift, but because the discipline that prevents drift is built into the operating posture of the session from the start.

The Portability Argument

Every commercial AI governance product is platform-bound.

Azure governance runs on Azure. AWS guardrails run on Bedrock. Google safety filters run on Vertex. IBM watsonx.governance runs on IBM infrastructure. Cisco and Netskope tie into their own security ecosystems. Each product is designed for its vendor’s environment and does not travel cleanly outside it.

The Faust Baseline is model-agnostic.

It is not code. It is not software. It is not a vendor product. It is a written discipline — a governance artifact that can be applied to any large language model on any platform in any operational context.

It has been tested across five platforms. Claude. GPT. Grok. Gemini. Copilot. The discipline holds across all of them because it does not depend on the architecture of any of them. It operates at the human-to-AI interface layer — the point of contact between the person forming the instruction and the model executing it — and that layer exists identically across every platform.

That portability is not a minor feature. In a world where organizations are running multiple AI systems across multiple vendors simultaneously, a governance discipline that travels with the user rather than being locked to the vendor is a fundamentally different kind of asset.

Who This Protects and Who It Does Not

This is the comparison that crystallizes the whole argument.

Enterprise AI governance products face inward. They protect the organization from liability. They protect the AI system from users who might misuse it. They protect the vendor from regulatory exposure. The primary beneficiary of mechanical governance is the institution.

The AI Governance Firewall — the protection layer built on Faust Baseline discipline — faces outward. It protects the user from the output. It sits between the answer and the decision the person is about to make based on that answer, and it demands that the answer earn the trust before the decision gets made.

In medicine. In law. In finance. In any domain where an ordinary person is relying on AI output to make a decision that has real consequences for their health, their rights, or their money — the question is not whether the output violated a content policy. The question is whether the output was produced with the discipline required to be trustworthy.

Mechanical governance cannot answer that question. It was never designed to.

The Baseline was designed for exactly that question and nothing else.

What This Category Actually Is

AI Baseline Governance is the name for the discipline that governs this layer.

Not a product category. A governance category. The recognition that between the prompt and the output, between the instruction and the execution, between the question and the decision — there is a reasoning layer that requires its own governing discipline, and that discipline has been absent from the conversation until now.

The enterprise market has mechanical governance covered. Access control, compliance, content filtering, policy enforcement — that infrastructure exists and it will continue to develop.

What has not existed is a discipline for governing the reasoning posture of AI in session. For the internal layer. For the space where drift happens, where unsupported claims form, where narrative replaces data, where the smooth answer crowds out the true one.

That is the gap AI Baseline Governance fills.

The Faust Baseline is the working model inside that category. Versioned, certified, tested across platforms, documented, and operational. Not theoretical. Running.

The Brain Worth Waking Up

If you work in AI governance and you have been operating entirely inside the mechanical governance frame — this is the argument worth sitting with.

Not because mechanical governance is wrong. Because it is incomplete.

The perimeter matters. The filter matters. The compliance instrument matters. None of that goes away.

But if the reasoning layer inside the session is ungoverned — if the AI can drift, claim beyond evidence, substitute narrative for data, and smooth over uncertainty without any discipline holding it accountable — then the perimeter you built is protecting a system that is still producing untrustworthy output.

The lock on the door does not matter if the problem is inside the room.

AI Baseline Governance is the discipline for inside the room.

The Faust Baseline is what that discipline looks like when it runs.

“A Working AI Firewall Framework”

“Intelligent People Assume Nothing” | Michael S Faust Sr. | Substack

Unauthorized commercial use prohibited. © 2026 The Faust Baseline LLC