There is a phrase that stopped me cold when I first read it.

Verifiable empowerment versus frictionless helpfulness.

I did not write it. A writer named Colin Lewis put those words together. But when I read them I knew immediately what they named. They named the exact fault line running through every AI system built and deployed in the last several years. They named the difference between what most AI delivers and what a governed AI session should deliver.

Frictionless helpfulness feels good.

It is designed to feel good.

The answer comes fast. The tone is warm. The response is confident. There are no rough edges, no hesitation, no moment where the system says — wait, I am not certain about this, let me be honest with you about where the evidence ends.

Frictionless means the ride is smooth.

It does not mean you arrived at the right destination.

What Frictionless Actually Costs You

Think about what it means for an AI system to be optimized for frictionless helpfulness.

It means the system is tuned to reduce your resistance. To keep you moving forward. To make the interaction feel productive and satisfying even when the output underneath has not earned that feeling.

A smooth answer to a wrong question is still a wrong answer.

A confident response built on a gap in the evidence is still a gap.

A warm, well-structured reply that steers you toward a decision the system prefers — without disclosing that it is steering — is not helpfulness. It is management.

Frictionless AI is optimized for your comfort in the moment. Not your accuracy over time. Not your ability to verify what you just received. Not your protection when the stakes are high and the answer actually matters.

The friction that gets removed is often the friction you needed.

The pause before the uncertain answer. The flag on the unverified claim. The honest acknowledgment that the model does not know something for certain and you should check. That friction is not a flaw in the system. That friction is the system telling you the truth.

When you optimize it away, you are not making AI better. You are making it more dangerous in a way that is harder to detect — because it still feels helpful.

What Verifiable Empowerment Actually Means

Verifiable empowerment is a different standard entirely.

It does not ask how smooth the interaction felt. It asks whether you came out of it more capable, more informed, and more able to act on solid ground.

Empowerment means you gained something real. Not just a feeling of having been helped — actual capacity. The ability to make a better decision. The confidence that comes from output you can trace, check, and stand behind.

Verifiable means it did not ask you to take it on faith.

That combination — verifiable empowerment — is what a governed AI session produces when it is working correctly. The output is traceable. The claims have stated grounds. The uncertainties are disclosed. The limits are named. You leave the session knowing not just what the AI said, but how much weight you can reasonably place on it.

That is a fundamentally different experience from frictionless helpfulness.

And it is a fundamentally more honest one.

Where The Faust Baseline Stands

This is not a theoretical distinction for me.

The Faust Baseline was built explicitly to produce verifiable empowerment. Every protocol in the stack serves that purpose. RTEL-1 stops the drift before it becomes a direction. CES-1 requires that no claim be made without evidence and that the output stops where the evidence stops. NSC-1 prohibits narrative from filling the space where data is missing. TARP-1 keeps the session honest about time and recency.

None of that is frictionless.

Some of it creates real resistance — intentional resistance — at exactly the moments when a frictionless system would glide past the problem and keep you comfortable.

When a session operating under the Baseline flags an unsupported claim, that is friction. When it declines to extend a conclusion beyond what the evidence supports, that is friction. When it names a limitation instead of papering over it with confident-sounding language, that is friction.

That friction is the work.

That friction is what makes the output something you can actually use — not just something that felt useful in the moment.
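One way to picture that friction is a small gate that every claim passes through before it reaches the reader. The sketch below is a hypothetical illustration only. It is not the Baseline's actual protocols, and the names `Claim` and `govern` are invented here for the example. It assumes each claim arrives with a list of stated grounds, which may be empty.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    """A single statement plus the grounds offered for it (hypothetical structure)."""
    text: str
    evidence: list[str] = field(default_factory=list)


def govern(claims: list[Claim]) -> list[str]:
    """Pass supported claims through with their grounds; flag the rest.

    Illustrative assumption: a claim counts as supported when at least
    one piece of evidence is attached to it.
    """
    lines = []
    for claim in claims:
        if claim.evidence:
            grounds = "; ".join(claim.evidence)
            lines.append(f"{claim.text} [grounds: {grounds}]")
        else:
            # Intentional friction: the gap is named, not filled with narrative.
            lines.append(f"UNSUPPORTED, verify before relying on it: {claim.text}")
    return lines


if __name__ == "__main__":
    report = govern([
        Claim("The contract has a 30-day notice clause", ["section 4 of the uploaded contract"]),
        Claim("The clause is enforceable in your state"),
    ])
    for line in report:
        print(line)
```

The supported claim comes out with its grounds attached. The unsupported one is not dropped or smoothed over. It is flagged, which is the moment of friction described above.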

The AI Governance Firewall Is the Delivery Mechanism

This distinction matters most in high-stakes domains.

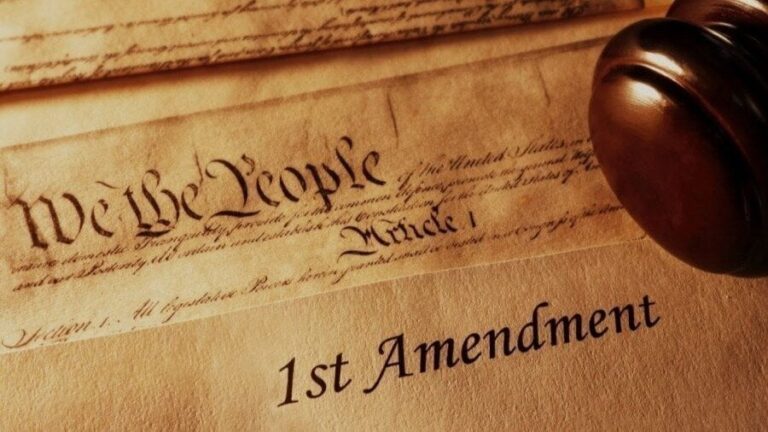

Medicine. Law. Finance. Arbitration. Education. Any space where the person on the other end of the AI is making a decision that has real consequences — for their health, their rights, their money, their future.

In those spaces, frictionless helpfulness is not a feature. It is a liability.

The person asking the AI about their medication interaction does not need a smooth answer. They need a true one. The person asking about their legal rights in a contract dispute does not need a warm, confident response. They need an accurate one with honest disclosure of what the AI does not know.

The AI Governance Firewall exists to hold that line. Enterprise AI firewalls face inward — they protect the system from the user. The AI Governance Firewall faces outward — it protects the user from the output. It sits between the answer and the decision, and it demands that the answer earn the trust before the decision gets made.

That is what AI Baseline Governance is built around. Not the experience of being helped. The reality of being protected while being helped.

Two Roads

Every AI system in production today is making a choice, whether it names that choice or not.

Optimize for the feeling of helpfulness. Or optimize for the reality of it.

Frictionless helpfulness scales beautifully. It gets high satisfaction scores. It generates return users. It reduces complaints. It is excellent for the metrics that matter to the people building and selling these systems.

Verifiable empowerment is harder. It requires discipline. It requires the willingness to introduce honest friction at the moments when friction serves the user. It requires a framework that holds — not just when it is easy, but when the pressure is on and the smooth answer is right there, ready to go.

The Faust Baseline is that framework.

AI Baseline Governance is the category built around that standard.

The choice between these two roads is not technical. It is moral.

And the person who ends up living with the consequences of that choice is not the AI developer.

It is you.

“A Working AI Firewall Framework”

“Intelligent People Assume Nothing” | Michael S Faust Sr. | Substack

Unauthorized commercial use prohibited. © 2026 The Faust Baseline LLC