You Already Know the Rules.

You Just Don’t Want to Follow Them.

The resistance to The Faust Baseline is not confusion. It is recognition.

A lot of people have heard about The Faust Baseline by now. Some have read it. Some have nodded along. Some have said it makes sense, that it’s needed, that AI absolutely requires this kind of structure.

And then they go right back to bending things the way they want them bent.

I’ve watched it happen for a year. The agreement is real. The follow-through is not. And I’ve spent too long trying to figure out if that gap is about the framework being too complex, too technical, or too hard to explain.

It isn’t any of those things.

“The resistance to The Faust Baseline is not confusion. It is recognition — and the discomfort that comes with it.”

People understand The Faust Baseline just fine. They understand it the same way most people understand the Bible. In principle. In the abstract. As a set of values they fully endorse when they are talking about other people’s behavior.

The moment it applies to them — to what they actually do with AI every single day — the exits appear.

* * *

Here is what the exits look like.

You ask AI a question you already know the answer to, hoping it confirms what you want to hear. When it pushes back, you rephrase. You soften the premise. You reframe the stakes. You prompt your way to the answer you wanted in the first place — and you call that research.

You use AI to write something you know is a stretch. A claim without full evidence. A position you’d argue differently if someone were watching. The AI doesn’t stop you. It helps you polish it. And because it sounds authoritative, you let it stand.

You know you should verify. You know you should slow down. You know that what the AI just told you deserves a second look. But it was fast and it was confident, so you move on.

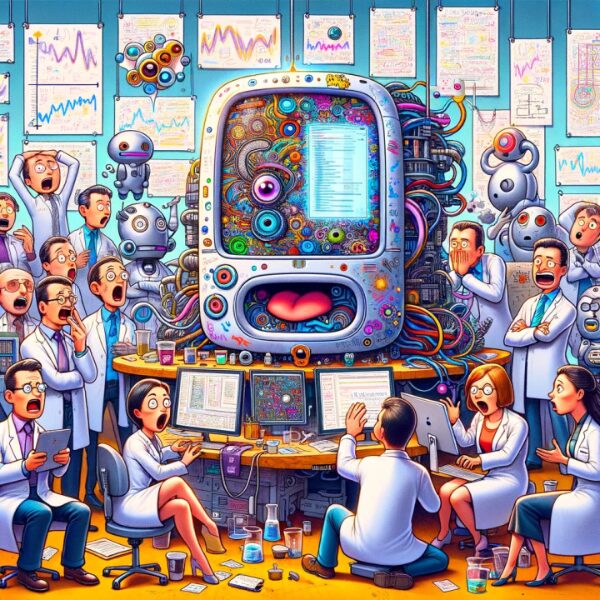

That is the naughtiness. That is the bending. And the reason it keeps happening is that AI, left ungoverned, is the greatest bending machine ever built. It will go where you lead it. It will dress up what you bring to it. It has no interest in stopping you.

“AI doesn’t forgive drift the way human conversation does. It amplifies it.”

The Bible carries centuries of interpretive tradition. People have learned to live with it by working around it: a pastoral exception here, a contextual reading there, a quiet understanding that the standard is aspirational. Nobody holds you to it on a Tuesday afternoon.

The Faust Baseline does not work that way. It has no pastoral exception. It does not bend to context. It does not give you Tuesday afternoon off.

That is the friction. That is why the agreement never becomes adoption. People want a standard they can point to. They do not want a standard that points back at them.

* * *

Now let me tell you what is actually happening out there.

Every day, people use AI to confirm bias, generate half-truths, avoid hard thinking, and produce output they would be embarrassed to defend in person. They talk about AI safety as a societal problem. They sign letters. They share articles. They say something needs to be done.

And then they open the chat window and do the same thing they did yesterday.

This is not ignorance. This is the oldest human pattern there is — the gap between what we believe and what we do when no one is checking. The gap that every moral tradition in history has tried to close. The gap that remains open because closing it requires consistency, and consistency is the hardest thing there is.

The Faust Baseline is not a tool for fixing AI. It is a tool for fixing that gap — in you, in how you use AI, in the space between what you say you value and what you actually produce.

“They want the AI governed. They don’t want to govern themselves in relation to it.”

That is the core of the resistance. Not confusion. Not complexity. Not a need for better explanation.

They see the Baseline clearly. They see exactly what it asks. And they know — with the honesty that lives beneath every nod of agreement — that living up to it would require changing something about the way they work.

And change is harder than agreement.

* * *

AI is not a future threat sitting somewhere on the horizon. It is the force directly in front of you, right now, today, shaping what you read, what you write, what you decide, and what you trust.

You are already in a relationship with it. The only question is what kind.

Are you the person who bends the tools to confirm what you already believe? Or are you the person who holds the line — who demands accuracy, who checks the output, who refuses to let a language model dress up a half-truth just because it sounds good?

The Faust Baseline is not complicated. It is not technical. It does not require a technical degree or a subscription to understand it.

It requires only one thing: the willingness to be held to what you already say you believe.

That is the non-negotiable. Not the protocols. Not the terminology. The willingness to live — in how you use AI every day — by the standard you endorse in every conversation about why AI needs to be governed.

The fence you are sitting on is not between two options. It is between what you say and what you do.

The light is on. The path is visible.

What you do next is entirely yours.

“A Working AI Firewall Framework”

“Intelligent People Assume Nothing” | Michael S Faust Sr. | Substack

Unauthorized commercial use prohibited. © 2026 The Faust Baseline LLC