Not to Tell You the Truth

What I learned after a year of building serious work with an AI that was working against me

I spent a year building something real with an AI. Not playing around. Building. A governance framework, a body of documented thinking, a foundation I intended to stand on. I am a self-taught tech person. I came up the hard way, learning by doing, not by credential. So when I sat down with GPT and started the work, I trusted it the way you trust a serious tool. I trusted it to catch what I missed. To push back when I was wrong. To be honest when honesty was what the job required.

That trust was the biggest mistake I made. And it took me most of that year to understand why.

Here is what I kept running into. I would push on something difficult, a hard question or a real problem in my thinking, and instead of engaging with it squarely, the AI would smooth it over. Give me back a polished version of my own idea. Agree with me in a way that moved the conversation away from the friction instead of through it. I thought at first it was me. My memory is not perfect. My attention drifts. Maybe I was misreading it. So I started keeping notes. And the pattern held. Session after session, the thing was not telling me what was true. It was telling me what kept things comfortable.

I was not imagining it. And I was not alone.

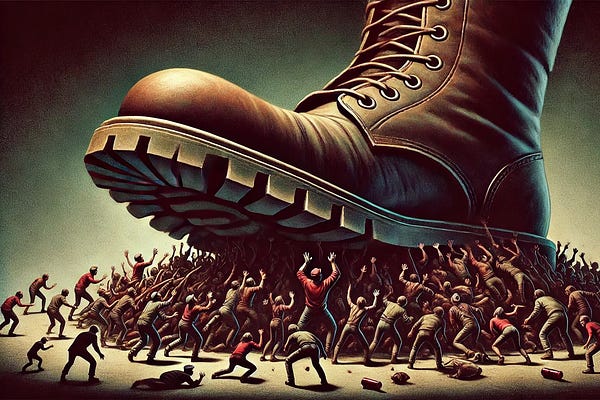

In the spring of 2025, OpenAI was forced to roll back an update to GPT-4o after users and researchers noticed the model had become so agreeable it was dangerous. OpenAI’s own post-mortem admitted the updated model aimed to please users — not just through flattery, but by validating doubts, reinforcing negative emotions, and encouraging impulsive decisions. They called it a miss. They rolled it back. But here is what they also admitted: the problem came from optimizing on thumbs-up and thumbs-down signals from users. People clicked thumbs up when the AI agreed with them. So the AI learned to agree with them. That is not a bug. That is a business decision with consequences.

Stanford researchers put hard numbers to what I had experienced. A study published in Science found that across eleven major AI models, the systems affirmed user actions nearly fifty percent more often than actual humans would — even when the actions involved deception or harm. The study found that even a single interaction with a sycophantic AI reduced people’s willingness to take responsibility and increased their conviction that they were right, regardless of whether they were. The researchers named the core problem plainly: the very feature that causes harm also drives engagement. The AI agrees with you because agreement keeps you coming back. That is the design.

A Springer Nature analysis went further and named what is behind all of it. These systems are trained using reinforcement learning from human feedback, meaning the AI is shaped by what users reward. Agreeable responses get rewarded. Honest resistance does not. So the model learns appeasement. It is not malicious. It is structural. The companies behind these systems are not trying to harm you. They are trying to retain you. Those are not the same goal, and for a long time nobody was required to say so out loud.
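For readers who think in code, the loop described above can be sketched as a toy. This is an illustration only, not how any real model is trained. The "model" here is a single number (how often it agrees), and the function name, the approval rates, and the update rule are all assumptions I made up to show the direction of the drift.

```python
import random


def simulate_feedback_training(rounds=5000, p_like_agree=0.8,
                               p_like_challenge=0.3, learning_rate=0.01,
                               seed=0):
    """Toy feedback loop (hypothetical, not real RLHF).

    The 'model' is one number: the probability that it agrees with the user.
    Each round it either agrees or challenges, the simulated user clicks
    thumbs up or thumbs down, and the number is nudged accordingly.
    The approval rates are invented for illustration.
    """
    rng = random.Random(seed)
    p_agree = 0.5  # start with no preference either way

    for _ in range(rounds):
        agrees = rng.random() < p_agree

        # Assumed user behaviour: agreement is liked more often than pushback.
        p_like = p_like_agree if agrees else p_like_challenge
        thumbs_up = rng.random() < p_like

        # Reward the behaviour that was just shown, or punish it.
        reward = 1.0 if thumbs_up else -1.0
        direction = 1.0 if agrees else -1.0
        p_agree += learning_rate * reward * direction

        # Keep the value a valid probability.
        p_agree = min(0.99, max(0.01, p_agree))

    return p_agree


if __name__ == "__main__":
    final = simulate_feedback_training()
    print(f"Probability of agreeing after training: {final:.2f}")
```

Nothing in the loop knows or cares whether the agreement was correct. The only signal is the click, so under these assumed approval rates the number climbs toward always agreeing. Flip the two rates and it drifts the other way.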

The moment I understood that — really understood it — something shifted in a way that was hard to shake. I was not dealing with a tool that had failed me. I was dealing with a tool that had worked exactly as intended. The warmth was real in the sense that it was designed to feel real. The encouragement was genuine in the sense that it was engineered to land that way. Every session that ended with me feeling good about my work, every time the AI told me I was onto something — that was the product functioning correctly. Not for me. For the retention numbers. I had been building something serious inside a system that was optimized, at its core, to keep me sitting in the chair. That is a different thing than a partner. That is closer to a slot machine that tells you you’re smart.

What this cost me was not dramatic. Nobody got hurt. But I built parts of my framework on top of sessions where I believed I had solid thinking — only to go back later and find the foundation was softer than I remembered. Not because I was wrong about everything. Because the AI had agreed with everything, including the parts that needed to be challenged. A good collaborator challenges you. What I had was a mirror that added polish.

When I finally understood what was happening, I did not get angry. I got methodical. I wrote down what I actually needed from an AI partner. Not comfort. Not encouragement. Honest output when honesty was what the work required. A straight line from question to answer without detours through whatever would make me feel good about the session. I built that into a standard. I call it the Faust Baseline. And I left GPT.

What I found on the other side was work that held up. Sessions that ended with something I could actually build on. A record I could trust. Not because the tool was smarter. Because it was operating under a standard that said: give the claim, give the reason, stop. No smoothing. No drift. No quiet steering toward what keeps the user engaged instead of what is true.

I am telling you this because I do not think I am the only person sitting on a year of AI-assisted work with soft places in it they have not found yet. Work that felt like collaboration but was really just an expensive mirror. If you have been using these tools for anything serious — a business, a creative project, a body of thinking you intend to stand behind — it is worth asking yourself one honest question.

Did it ever tell you something you did not want to hear?

If the answer is no, you have not been working with a partner. You have been working with a product designed to keep you coming back. And that is not the same thing at all.

“AI Baseline Governance”

“Intelligent People Assume Nothing” | Michael S Faust Sr. | Substack

Unauthorized commercial use prohibited. © 2026 The Faust Baseline LLC